- Bug

- Resolution: Done

- High

- London Release

- None

- None

Current behavior

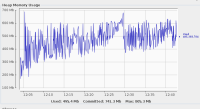

During testing with a large number of cmhandles, we found that sending cmhandles to NCMP slowed down drastically and ran into memory usage problems.

As the image above shows, sending in the first batches takes around 1-2 seconds each, while the last batches take more than 2 minutes each.

The 20000 cmhandles were sent in batches of 100 (200 x 100).
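The per-batch slowdown described above can be measured with a small timing harness. The following is a minimal sketch, not the script used in this test: `send_batch` is a hypothetical placeholder for whatever call actually registers a batch with NCMP, and the cmhandle ids are made up for illustration.

```python
import time
from typing import Callable, Iterator, List


def make_batches(ids: List[str], batch_size: int) -> Iterator[List[str]]:
    """Yield consecutive slices of at most batch_size ids."""
    for start in range(0, len(ids), batch_size):
        yield ids[start:start + batch_size]


def time_batches(ids: List[str], batch_size: int,
                 send_batch: Callable[[List[str]], None]) -> List[float]:
    """Send every batch and return the wall-clock duration of each send in seconds."""
    durations = []
    for batch in make_batches(ids, batch_size):
        started = time.perf_counter()
        send_batch(batch)
        durations.append(time.perf_counter() - started)
    return durations


def fake_send(batch: List[str]) -> None:
    """Hypothetical stand-in: replace with the real registration request."""
    pass


# Made-up ids, matching the 20000 cmhandles in batches of 100 from this report.
cm_handle_ids = [f"cmhandle-{i}" for i in range(20_000)]
durations = time_batches(cm_handle_ids, 100, fake_send)
print(f"{len(durations)} batches, first {durations[0]:.3f}s, last {durations[-1]:.3f}s")
```

Comparing the first and last entries of `durations` between 3.2.5 and 3.3.1 would show whether the batch time grows with the number of already registered cmhandles.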

With our default Java heap settings, the NCMP logs are full of "OutOfMemoryError: Java heap space" errors; almost all of the background processes report it.

Sending in the 20k cmhandles took more than 3.5 hours, whereas it previously took around 20 minutes.

The default memory usage settings:

- requested memory 2Gi

- memory limit 3Gi

- Of this, the Java heap is around 750 MB

If we increase the Java heap to 1500 MB, there are significantly fewer out-of-memory errors, but NCMP is still running at 100% memory usage.

Sending in the 20k cmhandles still took more than 3.5 hours, whereas it previously took around 20 minutes.

Increased java heap settings:

- requested memory 2Gi

- memory limit 3Gi

- Of this, the Java heap is around 1500 MB
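For reference, the two heap sizes can be expressed as a share of the 3Gi container limit. This is only arithmetic on the numbers above, and it assumes that "MB" in this report means MiB. The 750 MB figure is close to 25% of the limit, which is what a container-aware JVM typically picks when no explicit heap size is set; whether this deployment relies on that default is an assumption.

```python
def heap_share_of_limit(heap_mib: float, limit_gib: float) -> float:
    """Return the heap size as a percentage of the container memory limit."""
    return heap_mib / (limit_gib * 1024) * 100


# Heap sizes and limit taken from this report (MB treated as MiB, an assumption).
for heap_mib in (750, 1500):
    share = heap_share_of_limit(heap_mib, 3)
    print(f"{heap_mib} MB heap = {share:.1f}% of the 3Gi limit")
```

Even with the larger heap, roughly half of the container limit is left for non-heap JVM memory and everything else running in the container.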

We tried with and without the Hazelcast configuration, but the result seems to be the same.

This seems to be a memory leak, or at least much higher memory usage than in the previous version we tested, where the default memory settings mentioned above were sufficient.

Previously used version

3.2.5

https://gerrit.onap.org/r/gitweb?p=cps.git;a=commit;h=3bc22ed0ea833bdb649f393ec20c08dbb1bb7610

Version where we found the fault

3.3.1

https://gerrit.onap.org/r/gitweb?p=cps.git;a=commit;h=8879947bcad66545beba83614d8a3a7327e1889f

Expected behavior:

No OutOfMemoryError when sending in a large number of cmhandles (20k)

No memory leak and no higher heap usage than before

Similar cmHandle registration performance as before

Reproduction

Configure the Java heap size to our settings (see above)

Try to register 20000 cmhandles in NCMP in batches of 100

Registration will take several hours.

Sending in each batch takes progressively longer.

NCMP logs will be full of OutOfMemoryError messages

Profiling might be needed to see what is using so much memory
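To compare runs (for example the 750 MB and 1500 MB heap settings), the number of out-of-memory errors in the NCMP logs can be counted rather than estimated. A minimal sketch follows; the sample lines are placeholders and do not reflect the real NCMP log format.

```python
from typing import Iterable

# Error text as quoted in this report.
OOM_MARKER = "OutOfMemoryError: Java heap space"


def count_oom_lines(lines: Iterable[str]) -> int:
    """Count the lines that contain the heap-space OutOfMemoryError marker."""
    return sum(1 for line in lines if OOM_MARKER in line)


# Placeholder lines for illustration only, not real NCMP log output.
sample_lines = [
    "placeholder line without an error",
    "placeholder line: java.lang.OutOfMemoryError: Java heap space",
    "another placeholder line",
    "placeholder line: java.lang.OutOfMemoryError: Java heap space",
]
oom_count = count_oom_lines(sample_lines)
print(oom_count)
```

For a real log, an open file object can be passed directly to `count_oom_lines`, since it iterates line by line and does not load the whole file into memory.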

Test environment:

The test was performed on a Kubernetes cluster without wiremocked elements.

However, the problem seems to be independent of the DMI plugins, so it might be reproducible with wiremocked elements too.

Collected logs:

discovery.log

The NCMP logs were already sent previously; they cannot be attached here because of their large size and sensitive content.